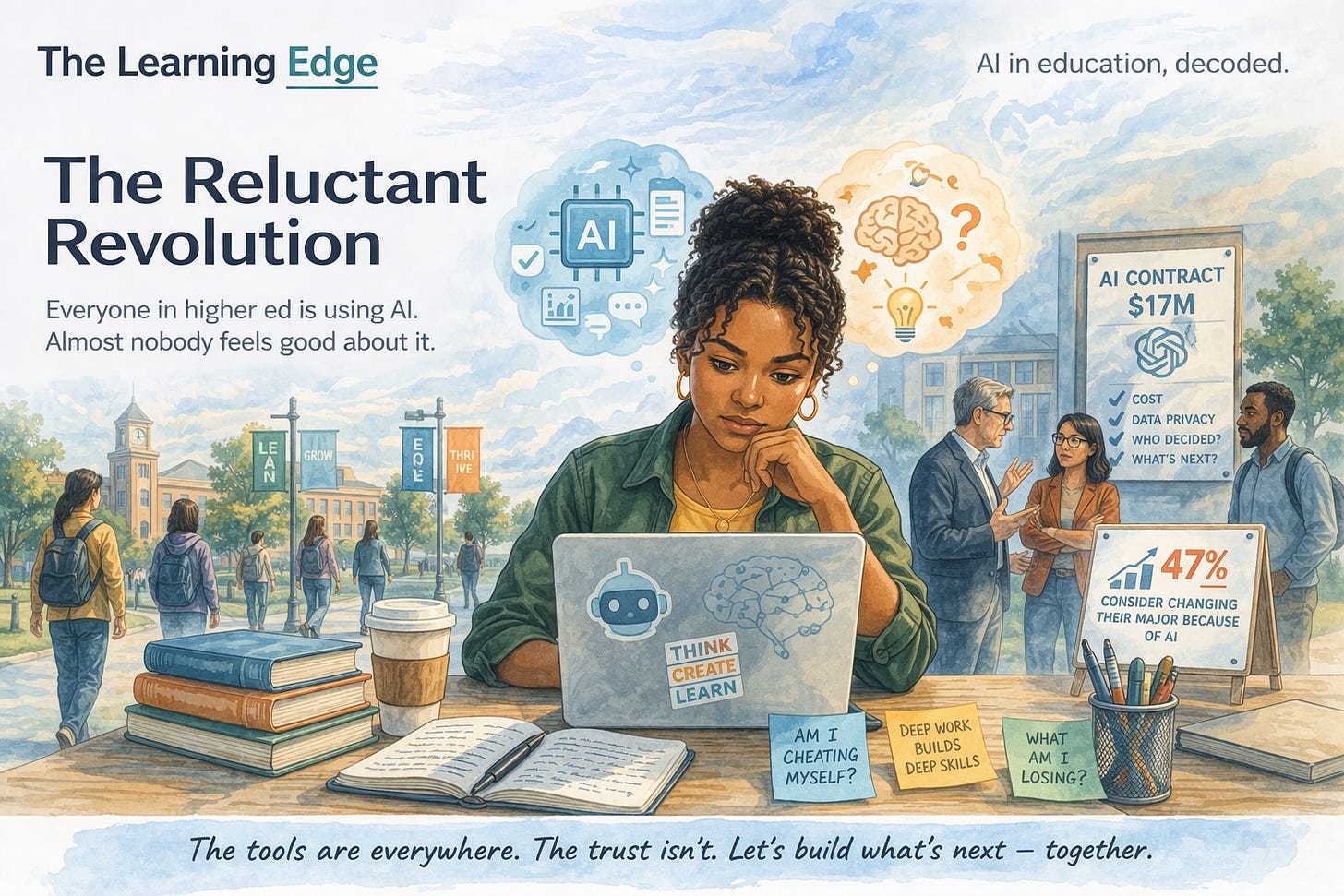

The Reluctant Revolution

Everyone in higher ed is using AI. Almost nobody feels good about it.

The California State University system just released the largest survey on generative AI in higher education. More than 94,000 faculty, staff, and students responded. The headline finding is striking, but not for the reason you might expect.

It’s not that AI use is widespread. We knew that. It’s that the people who use it the most are also the ones most skeptical of its impact on learning.

That tension tells us something important about where education is right now.

The Paradox Nobody’s Solving

A separate RAND survey found that 62 percent of students use AI for homework. In the same study, 67 percent said they worry it’s undermining their ability to think critically.

Read that again. Two-thirds of students believe AI might be making them worse at the one thing school is supposed to teach them. And most students keep using it anyway.

This isn’t hypocrisy. It’s rational behavior in a broken incentive system. Students face real pressure to be productive, to keep up, to compete with peers who are already using these tools. Opting out feels like bringing a notebook to a laptop exam. The cost of not using AI is immediate and visible. The cost of depending on it is slow and invisible.

EDUCAUSE Review recently gave this a name: “creeping cognitive displacement.” It describes the gradual outsourcing of thinking, which happens so slowly that you don’t notice until the skill is gone. It’s not cheating. It’s erosion.

Caught in the Middle

Students aren’t the only ones stuck. Faculty are wrestling with their own version of this paradox.

At Cal State, faculty groups are pushing back against the system’s $17 million OpenAI contract, set for renewal in June. Their concerns go beyond cost. They’re asking who decided this was the right investment, whether student data is adequately protected, and whether a licensing deal with a single AI vendor should be a top institutional priority.

Meanwhile, students report fearing false accusations of submitting AI-generated work, even when they haven’t used AI. The detection tools don’t work reliably. Everyone knows this. But the policies built around them persist.

The result is an environment where students use AI quietly, faculty distrust it loudly, and administrators sign contracts hoping to stay competitive. Nobody is aligned.

The Anxiety Is Reshaping Everything

Here’s where the story gets bigger. New data shows that 47 percent of students have considered changing their major because of AI. Sixteen percent have actually done it.

That’s not a pedagogical concern. That’s a structural shift. When nearly half your students are rethinking their academic path because of a technology, the conversation has moved well past “how do we integrate AI into assignments?” It’s about what education is for in a world where machines handle an expanding share of cognitive work.

An EdSurge essay this week captured the other side of this tension. A professor explained why she still asks students to write, even though she knows it’s hard and that AI can do it faster. Her argument is simple. The struggle is the learning. Outsource the struggle, and you outsource the growth.

What This Actually Demands

The institutions that navigate this well aren’t the ones with the largest AI contracts or the strictest bans. They’re the ones doing the unglamorous work of building shared understanding.

Honest conversations about what AI can replace and what it can’t. Indiana Wesleyan University built a faculty course refresh institute focused not on tools but on redesigning learning experiences. The emphasis was on helping faculty decide intentionally where AI belongs and where it doesn’t.

Policies that match reality. If students are using AI and detection tools don’t work, then policies built on detection are performative. Better to define what authentic work looks like in specific contexts and design assessments that make AI use a choice, not a cheat code.

Treating student anxiety as a signal, not noise. When 47 percent of students are rethinking their futures because of AI, that’s not a marketing problem. It’s an advising crisis that requires a real institutional response.

The Bottom Line

The Cal State survey didn’t reveal an adoption problem. It revealed a confidence crisis. The tools are everywhere. The trust isn’t. And until institutions close that gap, they’ll keep building AI strategies on a foundation that nobody actually believes in.