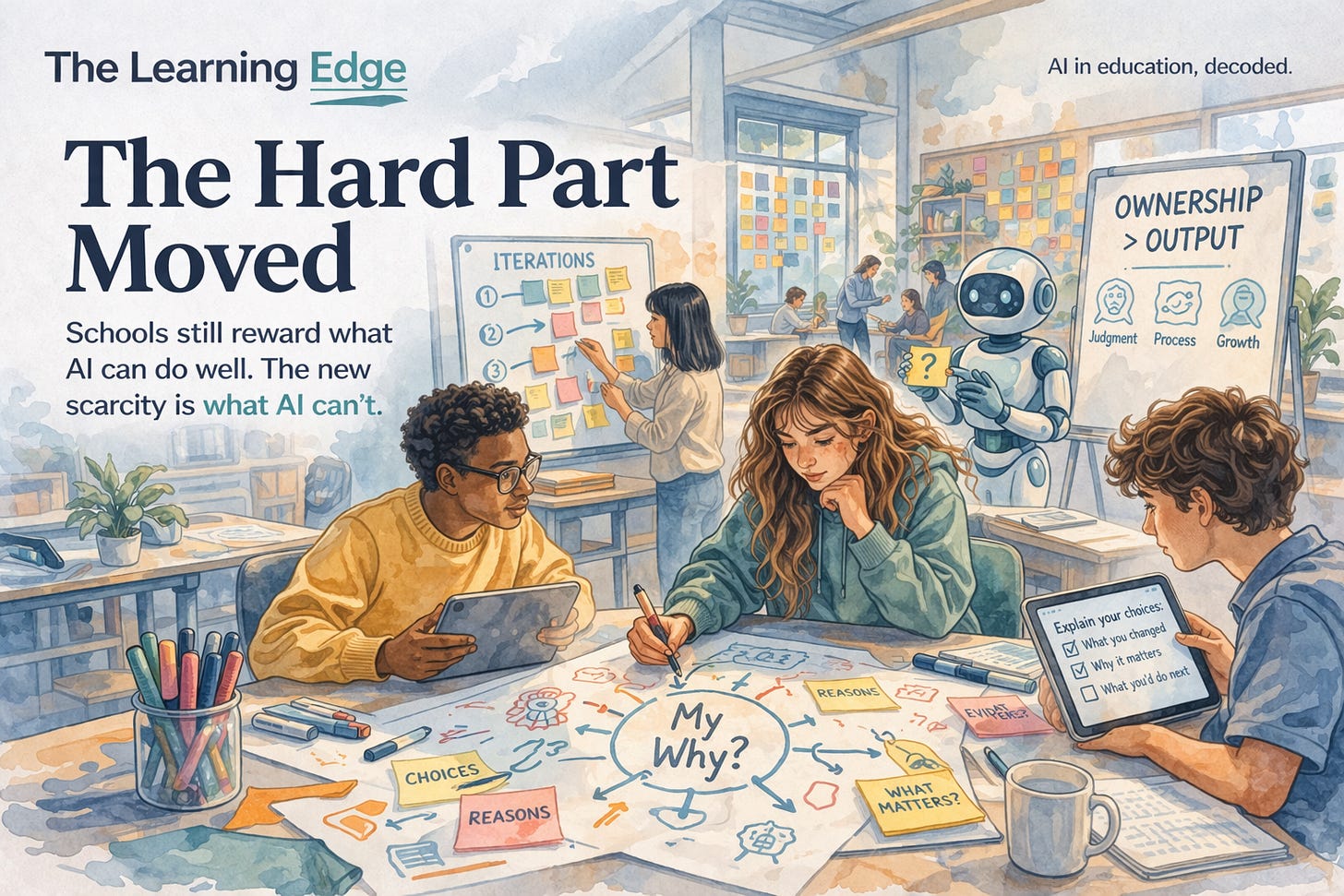

The Hard Part Moved

Schools still reward what AI can do well. The new scarcity is everything AI can’t.

A superintendent in Massachusetts just pointed out something that should rearrange how we think about AI in classrooms. Jeffrey Schoonover argues that Bloom’s Taxonomy is upside down now. The old pyramid climbed from remembering through understanding, applying, analyzing, and evaluating to “create” at the top. The hardest thing a student could do was produce something original. Most teachers have seen that pyramid a hundred times.

Schoonover’s point is simple. When AI can generate a passable essay, a working script, or a polished slide deck in seconds, creation is no longer the summit. It’s the trailhead. The cognitive work has shifted. The hard part moved.

Most institutions have not caught up.

The Faculty Aren’t Resisting. They’re Warning.

A new EDUCAUSE piece based on Augsburg University interviews reports that 88 to 94 percent of faculty worry that generative AI lets students offload cognitive effort and produce polished work without the struggle that learning actually requires.

Read that carefully. That is not a small faction of reluctant holdouts. That is almost everyone.

Too many change-management plans treat faculty skepticism as a communication problem. Send the right memo. Host the right training. Bring the skeptics along. But when nine in ten faculty are telling you the same thing, it stops being resistance. It becomes a signal.

The signal is this: the thing we used to grade, the output, is now the cheapest part of the job. Grading polished work in an AI world is like judging woodworking by whether the shelf is level. Of course it is. The machine did that part.

Students See It Too

Meanwhile, Gen Z is growing more wary of the tools they use every day. A new Gallup poll finds young people increasingly worried that AI designed to speed up tasks will actually make learning harder. That concern is coming from the group with the highest adoption rate on record: 57 percent use AI weekly. And they’re telling us it might be hurting them.

That is not hypocrisy. That is self-awareness. Students can feel the difference between producing something and understanding something. They use the tools because the tools work. They worry about the tools because they know what those shortcuts cost.

USC’s Erika Patall captured this well last week. AI didn’t create a cheating crisis. It exposed a motivation crisis. When the grade is the goal, students rationally use whatever gets them the grade. When learning is the goal, the calculation changes.

Ownership Is the New Output

David Ross, formerly of PBLWorks, frames this in a way I keep coming back to. In AI-rich classrooms, he writes, the scarce resource is not information. It is ownership.

That reframe does a lot of work. It means that a teacher’s job is no longer to deliver content. Content is abundant. The job is to help students take intellectual possession of their learning. To care about the thing they are working on. To know why it matters and to defend their choices.

Ownership is what Bloom’s old pyramid assumed but never named. Creation used to require ownership because you couldn’t fake it. Now you can fake the creation and skip the ownership entirely.

So the question becomes: how do you design a classroom where the ownership shows up explicitly?

What This Looks Like in Practice

Grade the judgment, not just the artifact. If a student used AI to draft an essay, the interesting question is what they kept, what they rewrote, and why. A three-minute oral walkthrough of a paper can reveal more than the paper itself. The work product is the starting point. The student’s reasoning about it is the assessment.

Make the thinking visible during the work, not just after. Ross suggests protocols such as an adversarial-interest interview, in which AI pushes students to defend why a topic matters to them. These kinds of tasks force ownership to surface in real time. They also happen to be much harder for AI to fake, because they require a specific student with specific reasons.

Write the AI policy your students actually need. Half of students say their institution has not told them what is expected. That is an institutional failure, not a student one. A short, clear, course-level statement about where AI helps and where it hurts is a minimum baseline. It also invites students to practice judgment, which is the whole point.

Listen to the skepticism instead of managing it. The roughly nine in ten faculty raising concerns are describing the same design problem administrators are trying to solve. Put them in the same room. Stop framing this as adoption.

The New Pyramid

The old pyramid had creation at the top because producing something original was the hardest cognitive act a student could perform. That assumption held for decades. It doesn’t hold anymore.

The new scarcity is judgment. Knowing what to ask. Knowing what’s worth keeping. Knowing when the machine is wrong. Owning the choice.

If schools keep rewarding the old top of the pyramid, they will keep rewarding what AI does best. That is a losing game for students and for institutions.

The hard part moved. The curriculum needs to move with it.

The Learning Edge publishes twice weekly on the intersection of AI and education. If this resonated, share it with a colleague who’s navigating these questions too.